Your AI agents are already acting. The question is whether you’re in control.

There’s a quiet crisis unfolding inside enterprise environments right now.

It isn’t headline-grabbing, and there’s no single breach to point to, but the numbers are hard to ignore: according to Okta’s AI at Work 2025 report, 91% of organizations are already using AI agents. Of those, 80% have experienced unintended agent behavior, and 23% have reported credential exposure via agents.

Perhaps most alarming: 44% have no governance in place whatsoever.

AI agents are already in your environment. They’re accessing data, calling APIs, executing workflows, and making decisions at speed. The question isn’t whether to deploy AI; that decision has already been made, often without you or your IT team in the room.

The question is whether you’re actually in control of what those agents are doing.

Most organizations aren’t.

And the reason isn’t a lack of security awareness, but an architectural gap that most security programs just weren’t built to address.

The Problem Traditional IAM Can’t Solve

Modern Identity and Access Management (IAM) was built for a specific model: human users, authenticating through browsers, accessing defined applications. It’s a model that served us well for a decade. But AI agents break every assumption that model rests on.

Agents aren’t tied to specific individuals. They don’t log in through federated SSO. They authenticate using API tokens, JWT credentials, and service accounts that live entirely outside your enterprise Identity Provider’s visibility. They spin up through CI/CD pipelines, often without human review, and can be decommissioned just as quickly. Their actions can span dozens of backend systems in seconds, and they run continuously in persistent, autonomous flows rather than discrete sessions, producing access patterns that look nothing like a human’s.

The result is a growing class of privileged activity that your enterprise IdP was never designed to govern. Organizations are now operating as agentic enterprises, where agents access the same SaaS tools employees use daily, except that they work much faster and touch far more data. A single compromised agent can exfiltrate data or make changes across every connected application faster than any human attacker could.

Six specific risk vectors define where that gap creates real exposure:

- Unauthorized data access: agents fetch data that users were never authorized to see, bypassing role-based controls entirely

- Stale or over-provisioned permissions: old tokens and roles grant agents excessive privileges that are never reviewed or revoked

- Compliance and audit gaps: agent actions aren’t tied to real user identities or logged consent, creating audit failures and regulatory exposure

- Weak authorization: coarse-grained access rules allow any agent to execute sensitive actions regardless of context

- Secrets and credential leakage: API keys surface in agent prompts and environment variables, where they can be exfiltrated

- Privacy and data-leak exposure: personal and sensitive data leaves secure zones and reaches unauthorized systems

These risks compound quickly as natural language interfaces make it trivially easy for any employee to build, connect, and run agent-based applications. Shadow AI – unsanctioned agents running entirely outside IT visibility – is already a reality in most enterprises. By the time security teams discover these agents, the attack surface has already grown.

The Three Questions Every Security Leader Must Answer

The blueprint for the secure agentic enterprise, introduced by Okta, frames the governance challenge as three operational questions that every security and IT leader must be able to answer before agents scale beyond control:

Where are my agents? Most organizations can’t answer this accurately. The agents employees spin up in a browser, the ones running quietly in desktop environments, and the third-party AI tools connected to your SaaS stack often operate without IT’s knowledge. Visibility isn’t aspirational here; it’s the minimum standard for operating in production.

What can they connect to? Once you can see an agent, you need to map every resource it can reach and enforce access policies around those connections. MCP servers, SaaS applications, agent-to-agent handshakes, service accounts, vaulted credentials: each connection point expands the potential blast radius. A single compromised agent that can chain access across your environment at machine speed is a very different threat from a compromised user account.
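A compromised agent’s reach is not limited to its direct connections: if it can call another agent that holds a service account, it inherits whatever that account can reach. One way to reason about blast radius is as the set of nodes reachable in a connection graph. The minimal Python sketch below assumes a hypothetical inventory expressed as an adjacency map; every node name is illustrative and not drawn from any real product.

```python
from collections import deque

# Hypothetical connection inventory: each node lists what it can reach directly.
# Nodes may be agents, MCP servers, SaaS apps, service accounts, or vaulted credentials.
CONNECTIONS: dict[str, list[str]] = {
    "agent:support-bot": ["mcp:ticketing", "agent:billing-helper"],
    "agent:billing-helper": ["svc:billing-api", "vault:payments-key"],
    "mcp:ticketing": ["saas:helpdesk"],
    "svc:billing-api": ["saas:erp"],
}


def blast_radius(start: str, graph: dict[str, list[str]]) -> set[str]:
    """Return every node reachable from `start` by following connections (breadth-first)."""
    seen: set[str] = set()
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbor in graph.get(node, []):
            # Each node is visited once, so cycles between agents cannot loop forever.
            if neighbor not in seen and neighbor != start:
                seen.add(neighbor)
                queue.append(neighbor)
    return seen


if __name__ == "__main__":
    direct = set(CONNECTIONS["agent:support-bot"])
    reachable = blast_radius("agent:support-bot", CONNECTIONS)
    print(f"direct: {len(direct)}, reachable: {len(reachable)}")
    print("reachable only by chaining:", sorted(reachable - direct))
```

Here the support agent has two direct connections but can reach six resources, including an ERP system and a vaulted key it was never granted directly. Breadth-first search is used because it terminates on cyclic graphs (agent A calling agent B calling agent A) and visits each node once.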

What can they do? Knowing where agents are and what they connect to isn’t enough if you can’t control and cut off what they actually do. You need runtime enforcement, a kill switch for immediate revocation, human-in-the-loop approval for sensitive actions, and complete audit logs flowing to your SIEM.
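These runtime controls can be expressed as a single decision point that every agent action passes through. This is a minimal sketch under assumed names (`AgentPolicy`, `authorize`, and `AUDIT_LOG` are hypothetical, not an Okta API): the `revoked` flag plays the role of a kill switch, sensitive actions return an approval-required decision instead of executing, and every decision is recorded as an event you would forward to a SIEM.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    NEEDS_APPROVAL = "needs_approval"


@dataclass
class AgentPolicy:
    """Hypothetical per-agent runtime policy; field names are illustrative."""
    allowed_actions: set[str]
    sensitive_actions: set[str] = field(default_factory=set)
    revoked: bool = False  # flipped by a kill switch


# Stand-in for the event stream you would send to a SIEM.
AUDIT_LOG: list[dict[str, str]] = []


def authorize(agent_id: str, action: str, policy: AgentPolicy) -> Decision:
    """Decide on a single action at runtime and record the decision."""
    if policy.revoked or action not in policy.allowed_actions:
        decision = Decision.DENY  # deny by default
    elif action in policy.sensitive_actions:
        decision = Decision.NEEDS_APPROVAL  # pause until a human approves
    else:
        decision = Decision.ALLOW
    AUDIT_LOG.append({"agent": agent_id, "action": action, "decision": decision.value})
    return decision


if __name__ == "__main__":
    policy = AgentPolicy(
        allowed_actions={"read_ticket", "issue_refund"},
        sensitive_actions={"issue_refund"},
    )
    for action in ("read_ticket", "issue_refund", "delete_account"):
        print(action, authorize("support-bot", action, policy).value)
    policy.revoked = True  # kill switch
    print("read_ticket after revoke", authorize("support-bot", "read_ticket", policy).value)
```

Denying anything not explicitly allowed keeps an agent that drifts outside its intended task from silently acquiring new capabilities.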

Organizations that can’t answer these questions are running blind, and when the auditor asks, when the board asks, when a breach happens, “We don’t know” is not a defensible position.

Identity Is the Answer

Identity is the key to AI security.

Every AI agent must be treated as a non-human identity: discoverable, authenticated, governed, and auditable. The enterprise IdP must become the control plane for all agent activity, not an afterthought.

This is the identity-first philosophy, and it changes the architecture conversation fundamentally. The answer isn’t to bolt security onto AI deployments after the fact, nor to run a separate governance program for agents while your core IAM remains unchanged. It’s to design identity security into the AI agent lifecycle from day one.

In practice, this means treating every AI agent as a first-class identity with the same governance applied to your most privileged human users:

- Every agent enrolled in the enterprise IdP with a named human owner accountable for its behavior

- Credentials vaulted and time-bound, with no long-lived tokens in production

- Access scoped to the minimum required for each specific task, enforced at runtime

- Every action logged, attributable, and available for audit

- Continuous monitoring with automated remediation for anomalies
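The checklist above lends itself to an automated posture check across an agent inventory. The sketch assumes a hypothetical `AgentRecord` shape and a one-hour ceiling for “time-bound” credentials; neither comes from a specific product, and a real check would read these fields from your IdP and secrets manager.

```python
from dataclasses import dataclass
from datetime import timedelta
from typing import Optional

# Assumed policy threshold for "time-bound"; choose a value that fits your environment.
MAX_TOKEN_TTL = timedelta(hours=1)


@dataclass
class AgentRecord:
    """Hypothetical inventory record for one agent (not a real IdP schema)."""
    agent_id: str
    owners: list[str]
    token_ttl: Optional[timedelta]  # None means the credential never expires
    granted_scopes: set[str]
    required_scopes: set[str]
    logging_enabled: bool


def audit(agent: AgentRecord) -> list[str]:
    """Return findings where the agent departs from the identity-first baseline."""
    findings: list[str] = []
    if not agent.owners:
        findings.append("no accountable human owner")
    if agent.token_ttl is None or agent.token_ttl > MAX_TOKEN_TTL:
        findings.append("long-lived credential")
    excess = agent.granted_scopes - agent.required_scopes
    if excess:
        findings.append(f"excess scopes: {sorted(excess)}")
    if not agent.logging_enabled:
        findings.append("actions not logged or attributable")
    return findings


if __name__ == "__main__":
    bot = AgentRecord(
        agent_id="report-bot",
        owners=[],
        token_ttl=None,
        granted_scopes={"crm.read", "crm.write"},
        required_scopes={"crm.read"},
        logging_enabled=True,
    )
    for finding in audit(bot):
        print(f"{bot.agent_id}: {finding}")
```

Running a check like this on a schedule, rather than once, is what makes it continuous; each finding is a candidate for automated remediation such as rotating the credential or trimming scopes.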

This is what Zero Trust looks like when it’s applied to non-human identities, and it’s the only architecture that scales as your agent population grows from dozens to hundreds or thousands.

What This Looks Like in Practice

The most common mistake enterprises make when they try to govern AI agents isn’t moving too slowly; it’s jumping ahead to the wrong phase. Security teams feel the authorization problem acutely (agents with too much access, credentials sprawling across systems), so they reach for access controls first. They tighten permissions, rotate tokens, and move on. But the discovery problem, which was always upstream of the authorization problem, never gets solved: agents keep proliferating, shadow AI keeps accumulating, and the new controls govern only the agents someone already knew about.

The sequence matters as much as the controls themselves.

Visibility has to come first

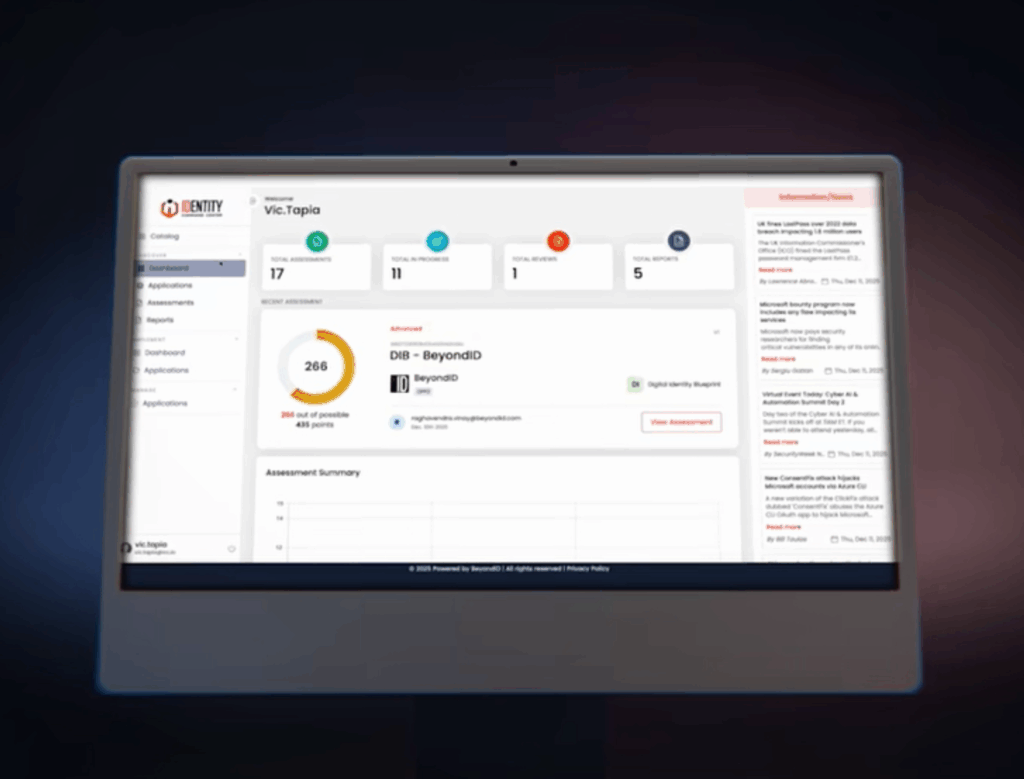

Not as a one-time audit, but as a continuous capability. Before you can govern an agent, you need to know it exists, where it was built, what systems it touches, what credentials it’s carrying, and who is accountable for its behavior. This is where a platform such as Okta for AI Agents does its first meaningful work: it imports agents from external platforms and discovers shadow agents, currently by monitoring browser OAuth grants and enriching them with user context. Most enterprises that do this work find a landscape far more complex than their official AI inventory suggests.
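Shadow-agent discovery from OAuth grants can be thought of as a set difference: clients that users have consented to, minus clients already in the sanctioned inventory, enriched with who granted them. The sketch assumes a hypothetical `OAuthGrant` record and a simple user-to-department lookup as the “user context”; it does not reflect how Okta for AI Agents implements discovery.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OAuthGrant:
    """Hypothetical record of one browser OAuth consent a user granted to a client."""
    client_id: str
    client_name: str
    user: str
    scopes: tuple[str, ...]


def find_shadow_agents(
    grants: list[OAuthGrant],
    registered_client_ids: set[str],
    departments: dict[str, str],
) -> dict[str, dict]:
    """Group grants to unregistered clients, enriched with who granted them."""
    shadow: dict[str, dict] = {}
    for grant in grants:
        if grant.client_id in registered_client_ids:
            continue  # already in the sanctioned inventory
        entry = shadow.setdefault(
            grant.client_id,
            {"name": grant.client_name, "users": set(), "departments": set(), "scopes": set()},
        )
        entry["users"].add(grant.user)
        entry["departments"].add(departments.get(grant.user, "unknown"))
        entry["scopes"].update(grant.scopes)
    return shadow


if __name__ == "__main__":
    grants = [
        OAuthGrant("c-001", "Sanctioned Copilot", "alice", ("mail.read",)),
        OAuthGrant("c-777", "Meeting Notes AI", "bob", ("calendar.read", "drive.read")),
        OAuthGrant("c-777", "Meeting Notes AI", "carol", ("drive.read",)),
    ]
    found = find_shadow_agents(grants, {"c-001"}, {"bob": "Sales", "carol": "Finance"})
    for client_id, info in found.items():
        print(client_id, info["name"], sorted(info["users"]), sorted(info["scopes"]))
```

Grouping by client rather than by grant means one unsanctioned tool used by fifty people surfaces as one agent with fifty grantors, which is the unit you would then register and assign an owner to.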

Registration converts visibility into accountability

An agent that exists in your environment but doesn’t exist in your IdP is ungovernable by definition. With Okta for AI Agents, every agent, whether already known or newly discovered, is enrolled in Universal Directory as a named, unique identity with a human owner (or group of owners) assigned.

Authorization is where policy enforcement gets precise

Authorization defines what an agent can access and what it’s allowed to do once it has that access. Okta for AI Agents replaces hardcoded credentials and standing access with scoped, short-lived tokens, issued only for what the agent needs, and only for as long as it needs it. It governs agent access to APIs, applications, service accounts, secrets, and MCP servers through controlled consent and token exchange flows with continuous policy enforcement.
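Short-lived, scoped tokens issued through token exchange can be illustrated with the standard OAuth 2.0 Token Exchange grant (RFC 8693), in which an agent presents its own token as the actor alongside the user’s token as the subject. The sketch is illustrative only: Okta for AI Agents’ actual flows, endpoints, and parameters may differ, and `MAX_TTL_SECONDS` and the function names are assumptions. The grant-type and token-type URNs are the ones defined in RFC 8693.

```python
from urllib.parse import parse_qs, urlencode

TOKEN_EXCHANGE_GRANT = "urn:ietf:params:oauth:grant-type:token-exchange"
ACCESS_TOKEN_TYPE = "urn:ietf:params:oauth:token-type:access_token"
MAX_TTL_SECONDS = 300  # assumed policy: agent tokens live at most five minutes


def build_exchange_request(user_token: str, agent_token: str, audience: str, scopes: list[str]) -> str:
    """Form-encode an RFC 8693 request: the agent (actor) acts for the user (subject)."""
    return urlencode({
        "grant_type": TOKEN_EXCHANGE_GRANT,
        "subject_token": user_token,
        "subject_token_type": ACCESS_TOKEN_TYPE,
        "actor_token": agent_token,
        "actor_token_type": ACCESS_TOKEN_TYPE,
        "audience": audience,
        "scope": " ".join(scopes),  # request only what this task needs
    })


def check_issued_token(response: dict, requested_scopes: list[str]) -> None:
    """Reject tokens with no expiry, an expiry beyond policy, or scopes beyond the request."""
    if "expires_in" not in response or int(response["expires_in"]) > MAX_TTL_SECONDS:
        raise ValueError("token lifetime missing or exceeds policy")
    # Per RFC 8693, an omitted "scope" means the issued scope equals the requested scope.
    granted = set(response.get("scope", " ".join(requested_scopes)).split())
    if not granted <= set(requested_scopes):
        raise ValueError(f"unexpected scopes: {sorted(granted - set(requested_scopes))}")


if __name__ == "__main__":
    body = build_exchange_request(
        "user-access-token", "agent-access-token", "https://api.example.com/tickets", ["tickets.read"]
    )
    print(parse_qs(body)["grant_type"])
    check_issued_token({"access_token": "issued-token", "expires_in": 300, "scope": "tickets.read"}, ["tickets.read"])
```

The client-side check is defense in depth: the authorization server should already enforce lifetime and scope, but an agent runtime that refuses over-broad or non-expiring tokens fails closed if server policy is misconfigured.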

Continuous governance enforces access controls across the full lifecycle

With Okta for AI Agents, access requests and access certifications for AI agents bring agents into the same standardized certification workflows used for SaaS applications, so agent owners, managers, and security admins can regularly review, approve, or revoke user access to AI agents with full auditability and automatic enforcement. Rich audit logs provide detailed telemetry on all AI agent activity, including tool calls, authorization decisions, and access attempts, and customers can optionally stream these insights directly to their SIEM for further investigation. This gives security teams the runtime visibility needed to detect anomalous agent behavior, enforce policies in real time, and revoke an agent’s access if it behaves unexpectedly. When an agent needs to be shut down, a single kill switch deactivates it and automatically revokes its access across every connected system.
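A kill switch has to reach every system where the agent holds live access, not just the directory entry. The sketch models that fan-out with a hypothetical `ConnectedSystem` type and a plain dictionary standing in for directory status; in practice each system would revoke through its own mechanism (for example, an OAuth token revocation endpoint as defined in RFC 7009), and none of these names are Okta APIs.

```python
from dataclasses import dataclass, field


@dataclass
class ConnectedSystem:
    """Hypothetical downstream system (app, MCP server, vault) that honors revocation."""
    name: str
    active_tokens: dict[str, set[str]] = field(default_factory=dict)  # agent_id -> token ids

    def revoke_all(self, agent_id: str) -> int:
        """Drop every live token for the agent and return how many were revoked."""
        return len(self.active_tokens.pop(agent_id, set()))


def kill_switch(
    agent_id: str,
    directory_status: dict[str, str],
    systems: list[ConnectedSystem],
    audit_log: list[dict],
) -> int:
    """Deactivate the agent identity, then revoke its tokens in every connected system."""
    # Deactivate at the identity source first so no new tokens can be minted mid-sweep.
    directory_status[agent_id] = "DEACTIVATED"
    total = 0
    for system in systems:
        revoked = system.revoke_all(agent_id)
        audit_log.append({"event": "agent.revoked", "agent": agent_id, "system": system.name, "tokens": revoked})
        total += revoked
    return total


if __name__ == "__main__":
    systems = [
        ConnectedSystem("crm", {"report-bot": {"t1", "t2"}}),
        ConnectedSystem("mcp:files", {"report-bot": {"t3"}, "other-bot": {"t4"}}),
    ]
    status = {"report-bot": "ACTIVE", "other-bot": "ACTIVE"}
    log: list[dict] = []
    print("tokens revoked:", kill_switch("report-bot", status, systems, log))
    print(status)
```

Deactivating the identity before sweeping downstream systems matters, because otherwise the agent could obtain a fresh token in one system while another is being revoked. Each revocation is also logged per system, so the audit trail shows exactly where access was cut.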

The Structural Problem

As enterprises engage strategy firms to define their AI vision, there is a growing opportunity to streamline how AI services are delivered. Traditionally, aligning the initial AI strategy with the necessary identity governance and security architecture can involve multiple phases and teams. By proactively integrating deep Okta platform expertise alongside the systems integration process from day one, enterprises can create a cohesive delivery model that enhances security, reduces complexity, and accelerates successful AI deployment.

This is the problem BeyondID and Nexera are partnering to solve.

Nexera operates at the AI Platform layer, designing, building, and running production-grade AI systems, from initial strategy through custom agent development and ongoing managed operations. BeyondID operates at the Trust Layer, securing every agent, model, and workflow with least-privilege access controls and continuous monitoring. Okta is the identity platform of choice.

What that means operationally is that the handoff points that typically introduce delay, misalignment, and security gaps disappear. Identity governance isn’t a downstream workstream that begins after the AI system is built. It’s an architectural input that shapes how the system is built: present at the design stage, validated at the build stage, and operational at launch.

As Arun Shrestha, Founder of BeyondID, put it: “Enterprises are under enormous pressure to deploy AI quickly, but speed without governance is a liability. Now, organizations no longer have to choose between moving fast and staying secure.”

Tom Wisnowski, CEO and Founder of Nexera, framed what the integrated model makes possible: “AI is only as powerful as the trust placed in it. With BeyondID, we can now offer our clients the full stack – from intelligent systems to the identity infrastructure that makes those systems safe to operate at enterprise scale.”

“Okta and our trusted ecosystem of partners, like BeyondID, empower global enterprises to securely adopt AI and agentic workflows,” added Alex Valenzuela, Vice President, Americas Partners & Alliances at Okta. “By leveraging Okta for AI Agents and their own deep industry expertise, our partners ensure every agent is properly governed and managed, protecting our customers’ sensitive assets while enabling rapid innovation and business value.”

That full stack, delivered as a single integrated motion, is what gets enterprises from strategy to production in 90 days rather than several quarters, and what ensures the system that reaches production is governed from the inside out, not patched from the outside in.

The Window Is Now

The organizations that will navigate the agentic era successfully are not the ones that deploy AI the fastest. They are the ones that establish identity governance for their AI agents before agent sprawl makes the problem intractable.

That window is still open, but it’s quickly narrowing. Every week that agents operate outside your identity security fabric is another week of audit exposure, compliance risk, and potential credential compromise. The good news is that the architecture to solve this exists today, the tooling is mature, and the methodology is proven.

The question is whether you start now, before the first incident forces your hand — or after.

BeyondID is a leading AI-powered Managed Identity Solutions Provider and a KeyData Cyber Family Company. In strategic partnership with Nexera, BeyondID delivers end-to-end Secure AI services — from AI Identity Readiness Sprints through 90-Day Secure Agent Launches and continuous managed operations — powered by Okta’s Identity Security Platform.

Ready to understand where your organization stands? Schedule a complimentary AI Identity Readiness Sprint scoping call with BeyondID →